Going to Production

Going to production checklist for AI applications.

This guide will help you to prepare your application for production. We'll provide actionable steps to help you scale your application, ensure that it is reliable, can handle the load, and provide optimal precision for your use case.

See our Engineering for Scale guide for more information about engineering at scale.

Do you need indexes?#

Sequential scans will result in significantly higher latencies and lower throughput, guaranteeing 100% precision and not being RAM bound.

There are a couple of cases where you might not need indexes:

- You have a small dataset and don't need to scale it.

- You are not expecting high amounts of vector search queries per second.

- You need to guarantee 100% precision.

You don't have to create indexes in these cases and can use sequential scans instead. This type of workload will not be RAM bound and will not require any additional resources but will result in higher latencies and lower throughput. Extra CPU cores may help to improve queries per second, but it will not help to improve latency.

On the other hand, if you need to scale your application, you will need to create indexes. This will result in lower latencies and higher throughput, but will require additional RAM to make use of Postgres Caching. Also, using indexes will result in lower precision, since you are replacing exact (KNN) search with approximate (ANN) search.

Understanding probes and lists#

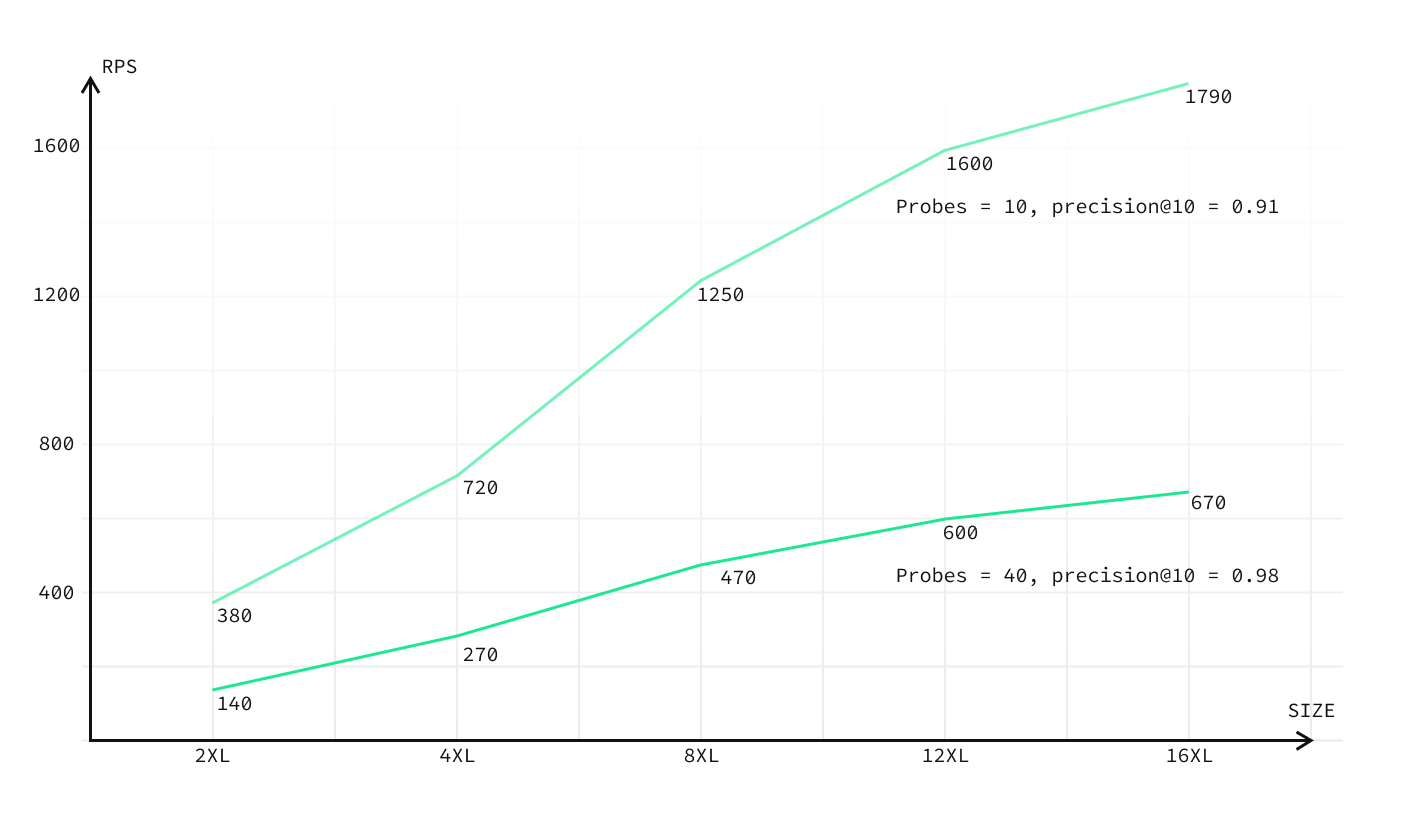

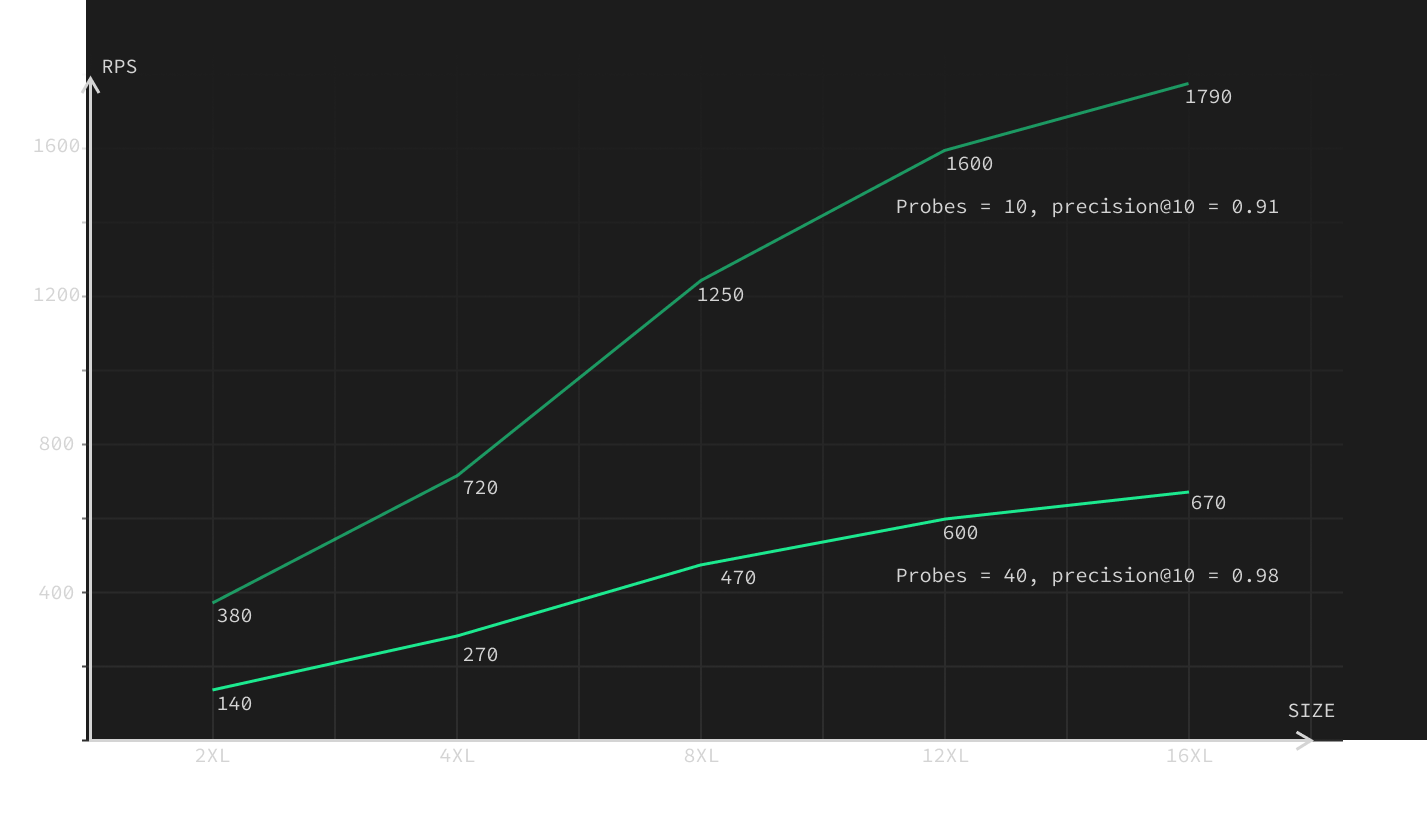

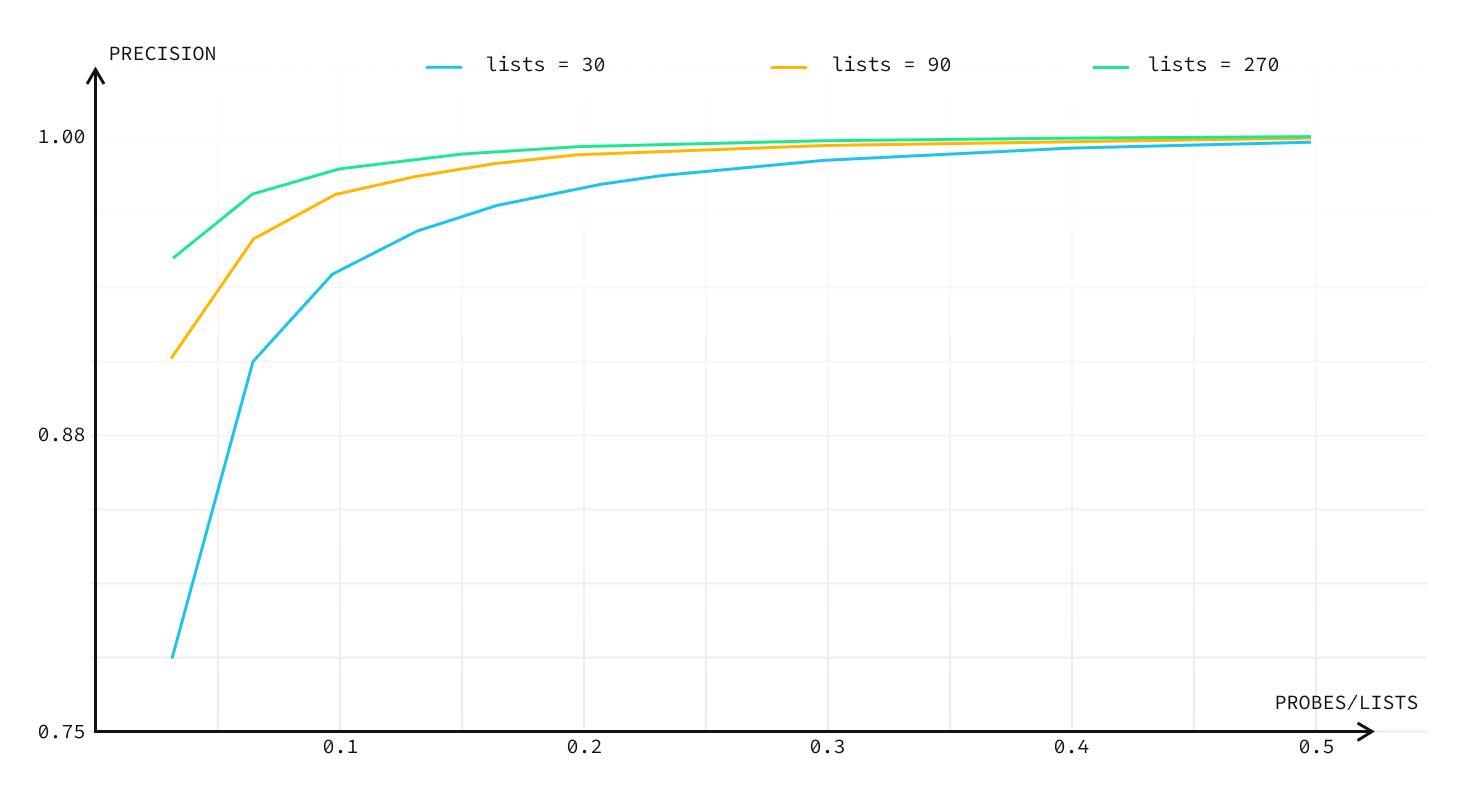

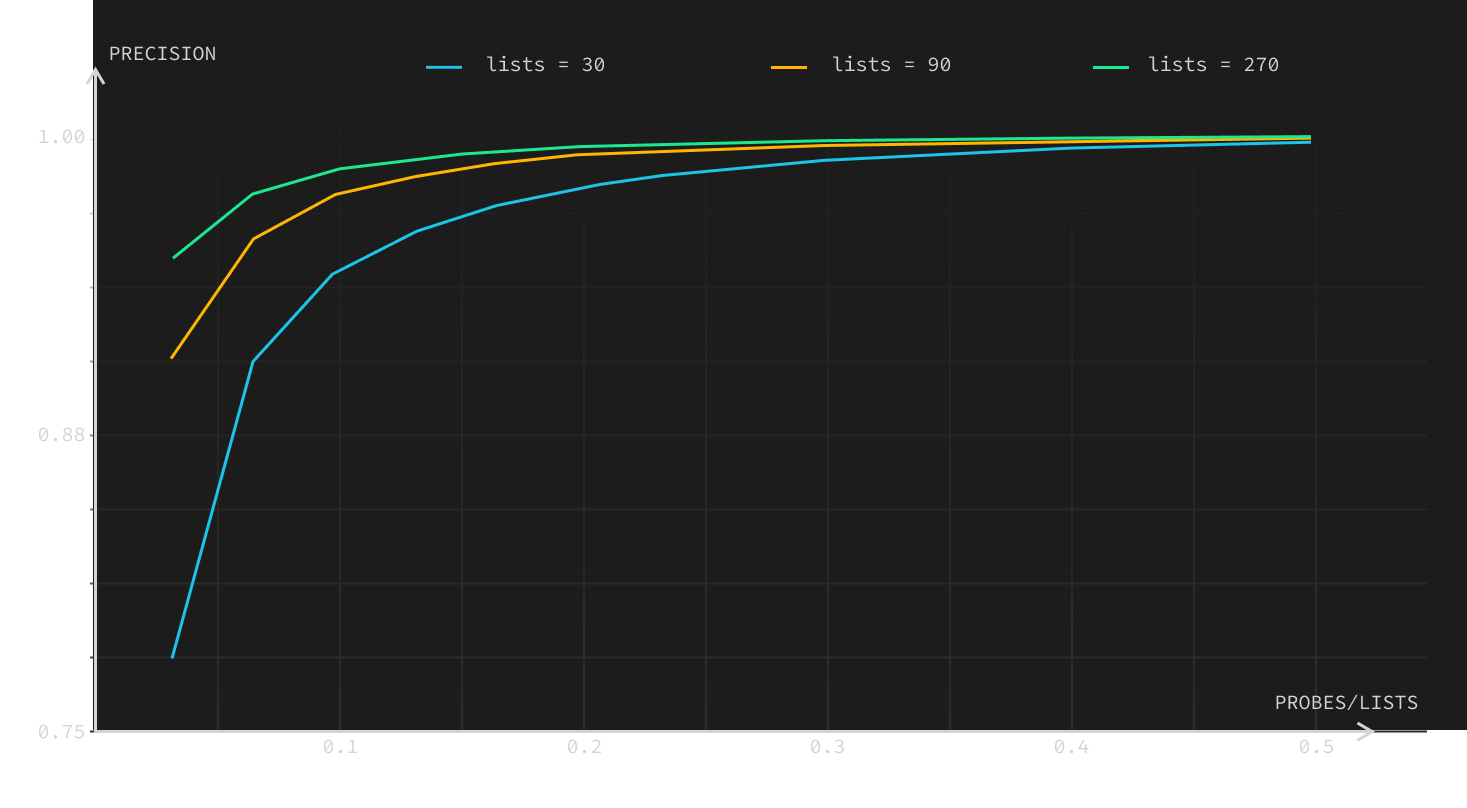

Indexes used for approximate vector similarity search in pgvector divides a dataset into partitions. The number of these partitions is defined by the lists constant. The probes controls how many lists are going to be searched during a query.

The values of lists and probes directly affect precision and requests per second (RPS).

- Higher

listsmeans an index will be built slower, but you can achieve better RPS and precision. - Higher

probesmeans that select queries will be slower, but you can achieve better precision. listsandprobesare not independent. Higherlistsmeans that you will have to use higherprobesto achieve the same precision.

You can find more examples of how lists and probes constants affect precision and RPS in pgvector 0.4.0 performance blogpost.

Performance Tips when using indexes#

First, a few generic tips which you can pick and choose from:

- The Supabase managed platform will automatically optimize Postgres configs for you based on your compute addon. But if you self-host, consider adjusting your Postgres config based on RAM & CPU cores. See example optimizations for more details.

- Prefer

inner-producttoL2orCosinedistances if your vectors are normalized (liketext-embedding-ada-002). If embeddings are not normalized,Cosinedistance should give the best results with an index. - Pre-warm your database. Implement the warm-up technique before transitioning to production or running benchmarks.

- Execute 10,000 to 50,000 "warm-up" queries before each benchmark, matching the number of

probesyou are going to use in production. Additionally, you can execute about 1,000 queries with probes ranging from three to ten times the prod's probes. Both of these help to increase RAM utilization.

- Execute 10,000 to 50,000 "warm-up" queries before each benchmark, matching the number of

- Establish your workload. Increasing the lists constant for the pgvector index can accelerate your queries (at the expense of a slower build). For instance, for benchmarks with 1,000,000 embeddings, we employed a

listsconstant of 2000 (number of vectors / 500) as opposed to the suggested 1000 (number of vectors / 1000). - Benchmark your own specific workloads. Doing this during cache warm-up helps gauge the best value for the

probesconstant, balancing precision with requests per second (RPS).

Going into production#

- Decide if you are going to use indexes or not. You can skip the rest of this guide if you do not use indexes.

- Over-provision RAM during preparation. You can scale down in step

5, but it's better to start with a larger size to get the best results for RAM requirements. (We'd recommend at least 8XL if you're using Supabase.) - Upload your data to the database. If you use the

vecslibrary, it will automatically generate an index with default parameters. - Run a benchmark using randomly generated queries and observe the results. Again, you can use the

vecslibrary with theann-benchmarkstool. Do it with probes set to 10 (default) and then with probes set to 100 or more, so RPS will be lower than 10. - Monitor the RAM usage, and save it as a note for yourself. You would likely want to use a compute add-on in the future that has the same amount of RAM that was used at the moment (both actual RAM usage and RAM used for cache and buffers).

- Scale down your compute add-on to the one that would have the same amount of RAM used at the moment.

- Repeat step 3 to load the data into RAM. You should see RPS increase on subsequent runs, and stop when it no longer increases. Then repeat the benchmark with probes set to a higher value if you haven't already performed it for that compute add-on size.

- Run a benchmark using real queries and observe the results. You can use the

vecslibrary for that as well withann-benchmarkstool. Set probes to 10 (default) and then gradually increase/decrease probes until you see that both precision and RPS match your requirements. - If you want higher RPS and you don't expect to have frequent inserts and reindexing, you can increase

listsconstantly. You have to rebuild the index with a higher lists value and repeat steps 6-7 to find the best combination oflistsandprobesconstants to achieve the best RPS and precision values. Higherlistsmean that index will build slower, but you can achieve better RPS and precision. Higher probes mean that select queries will be slower, but you can achieve better precision.

Useful links#

Don't forget to check out the general Production Checklist to ensure your project is secure, performant, and will remain available for your users.

You can look at our Choosing Compute Add-on guide to get a basic understanding of how much compute you might need for your workload.

Or take a look at our pgvector 0.4.0 performance blog post to see what pgvector is capable of and how the above technique can be used to achieve the best results.